As AI agents rapidly expand their ability to learn from human-generated content, creators and organizations face a fundamental challenge: how can creative contribution be protected without stifling innovation? Existing systems offer little transparency into how AI learns from, replicates, or transforms that content, and this uncertainty creates friction between creators, platforms, and emerging AI ecosystems. Sapience 6.1 was initiated as a collective framework to redefine how learning, contribution, and value can coexist in the AI era.

The project focuses on building practical structures rather than holding abstract discussions. We designed a strategic framework that enables:

- visibility into how content influences AI learning
- measurable contribution tracking
- collaborative response models across industries

Sapience 6.1 positions itself as a neutral layer connecting creators, technology companies, and institutional stakeholders.

Sapience 6.1 represents a shift from passive AI adoption toward collective intelligence governance. Rather than resisting technological progress, the initiative introduces a structured way for creators, organizations, and AI systems to coexist — where contribution is visible, learning becomes transparent, and value can be shared more fairly. This framework is not an end state, but a foundation for the next stage of AI-driven collaboration.

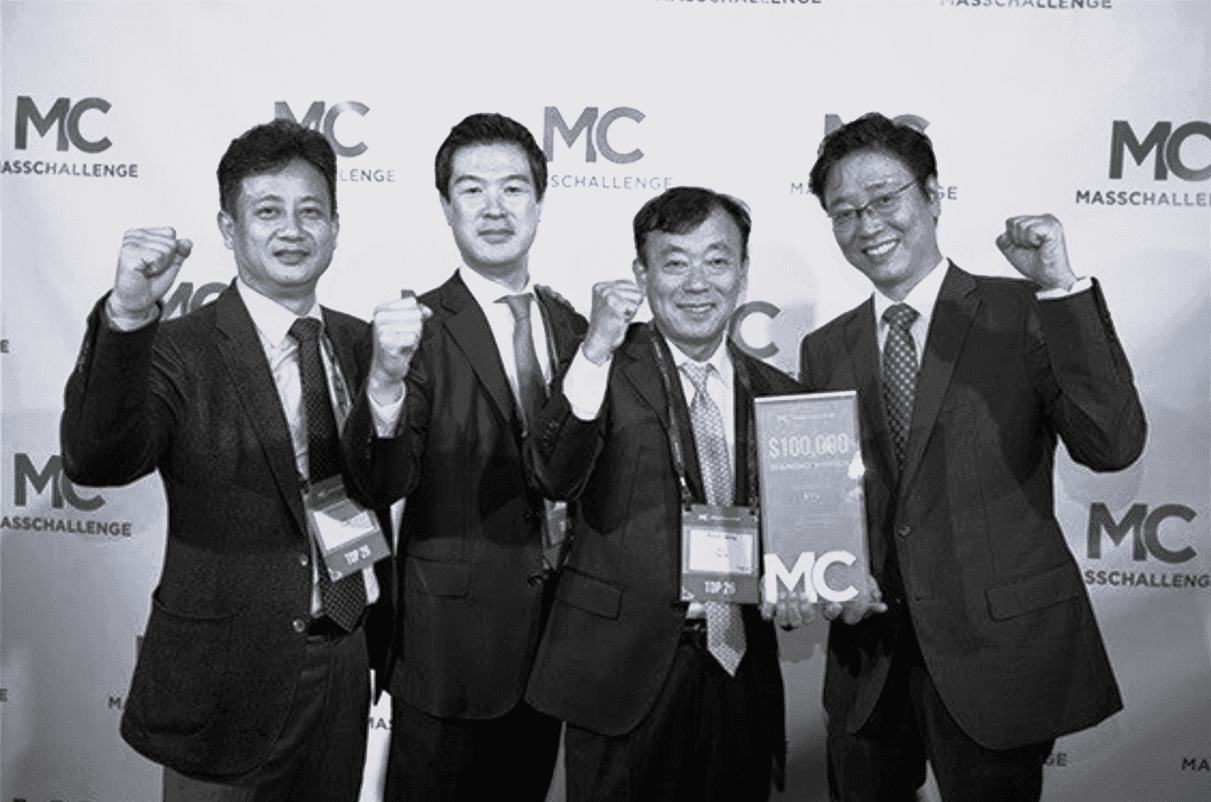

For LargeAct, Sapience 6.1 is more than a project — it is a long-term vision. As AI agents continue to evolve, we believe sustainable innovation depends on building systems where human creativity remains measurable, respected, and economically connected to technological growth.


(2019-26©)